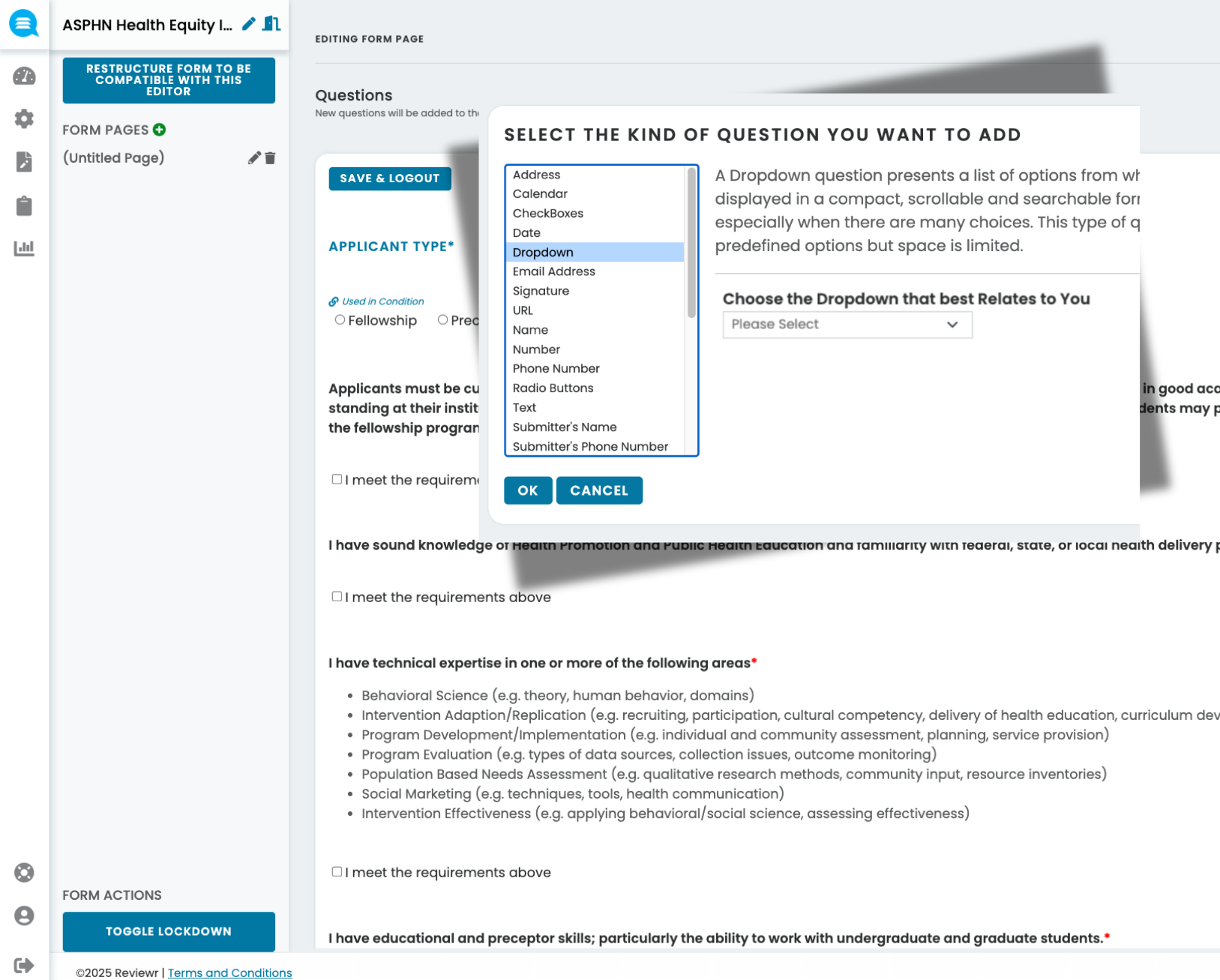

Give submitters the experience your program deserves — with structured forms, co-author management, and complete validation that ensures every entry arrives ready to review.

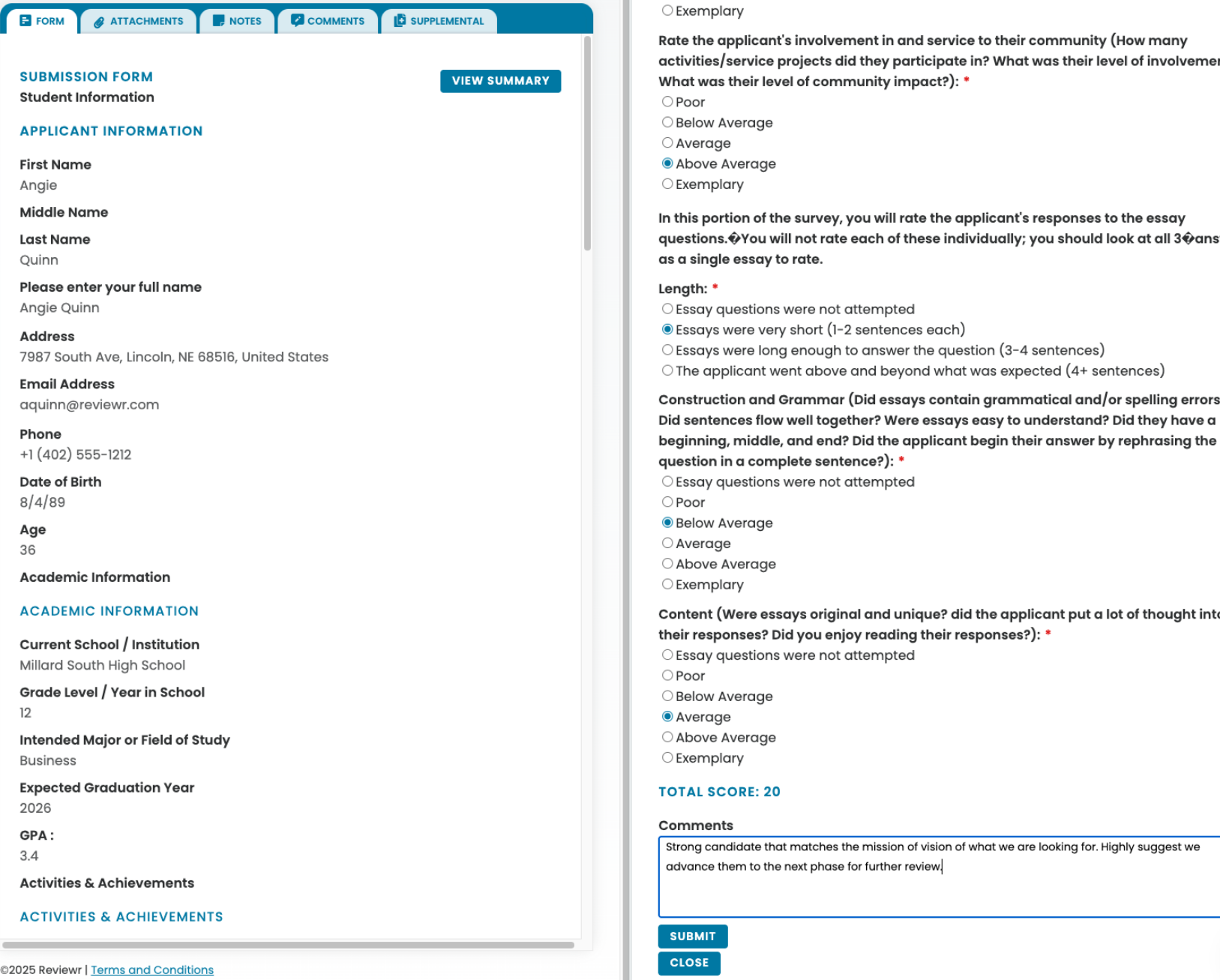

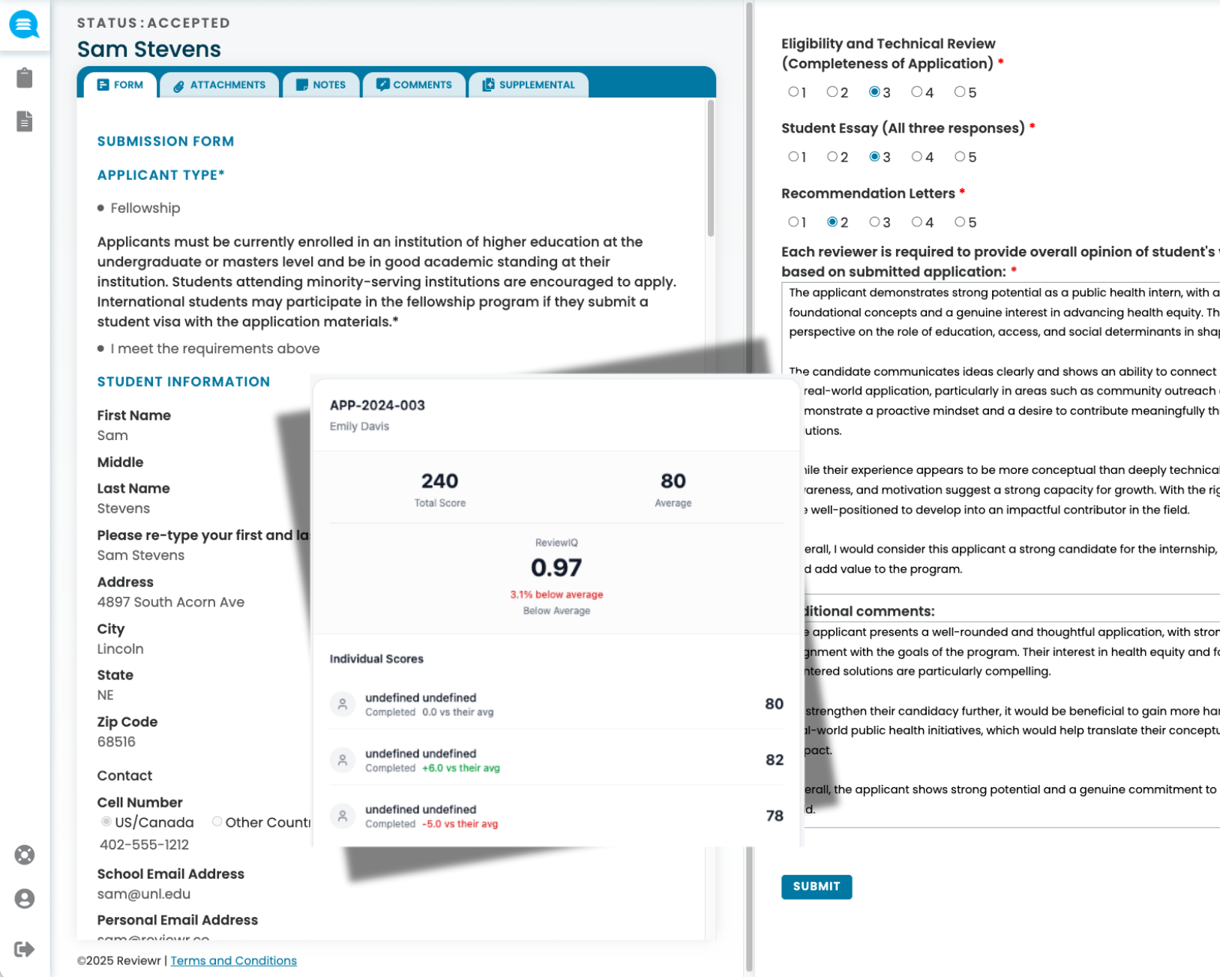

Give your review committee the structure they need — with blind review, conflict disclosure, and score normalization that makes every evaluation fair and defensible.

From reviewer scores to final decisions to post-selection deliverables, everything runs in one connected workflow that keeps everyone informed and ensures nothing falls through the cracks.


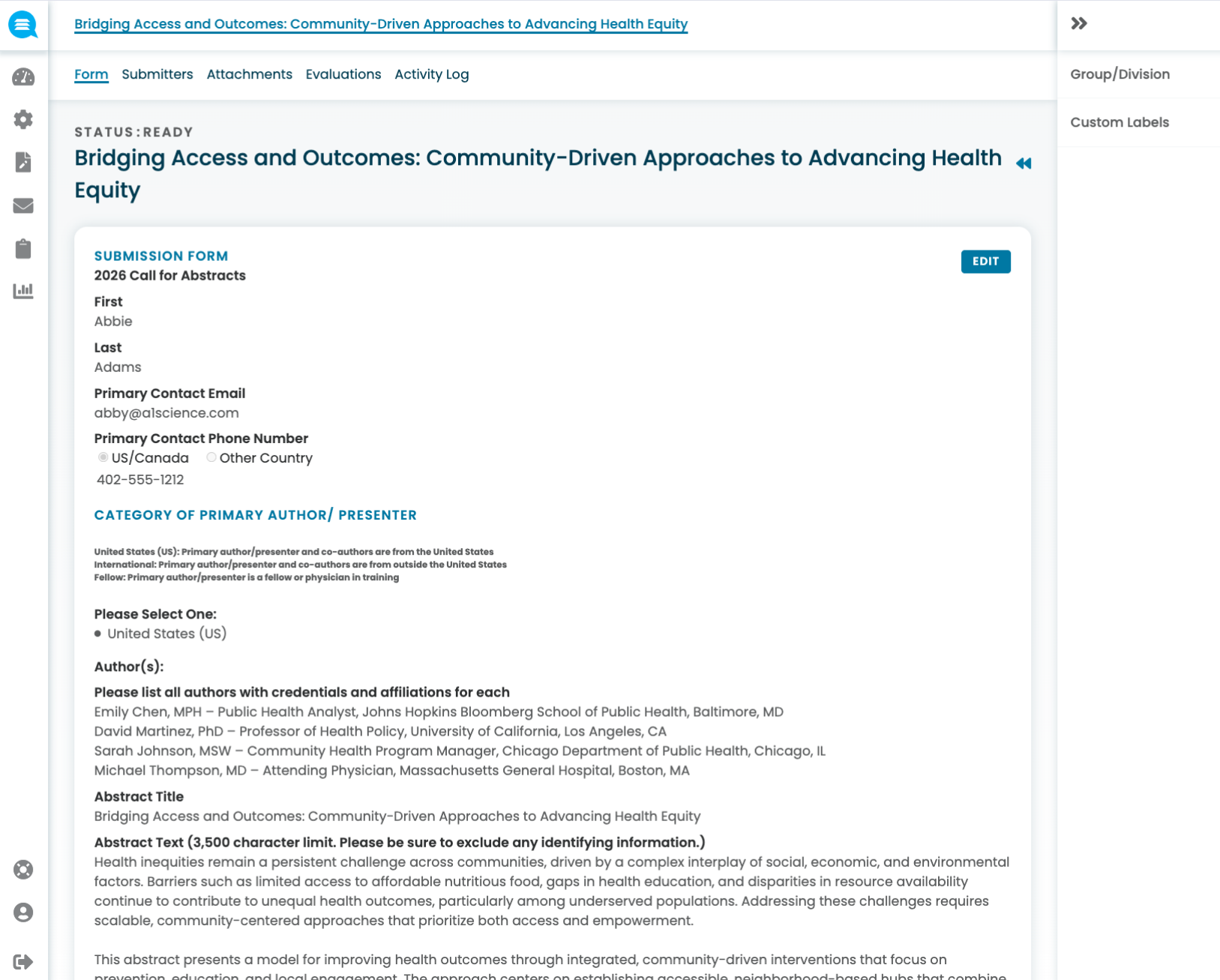

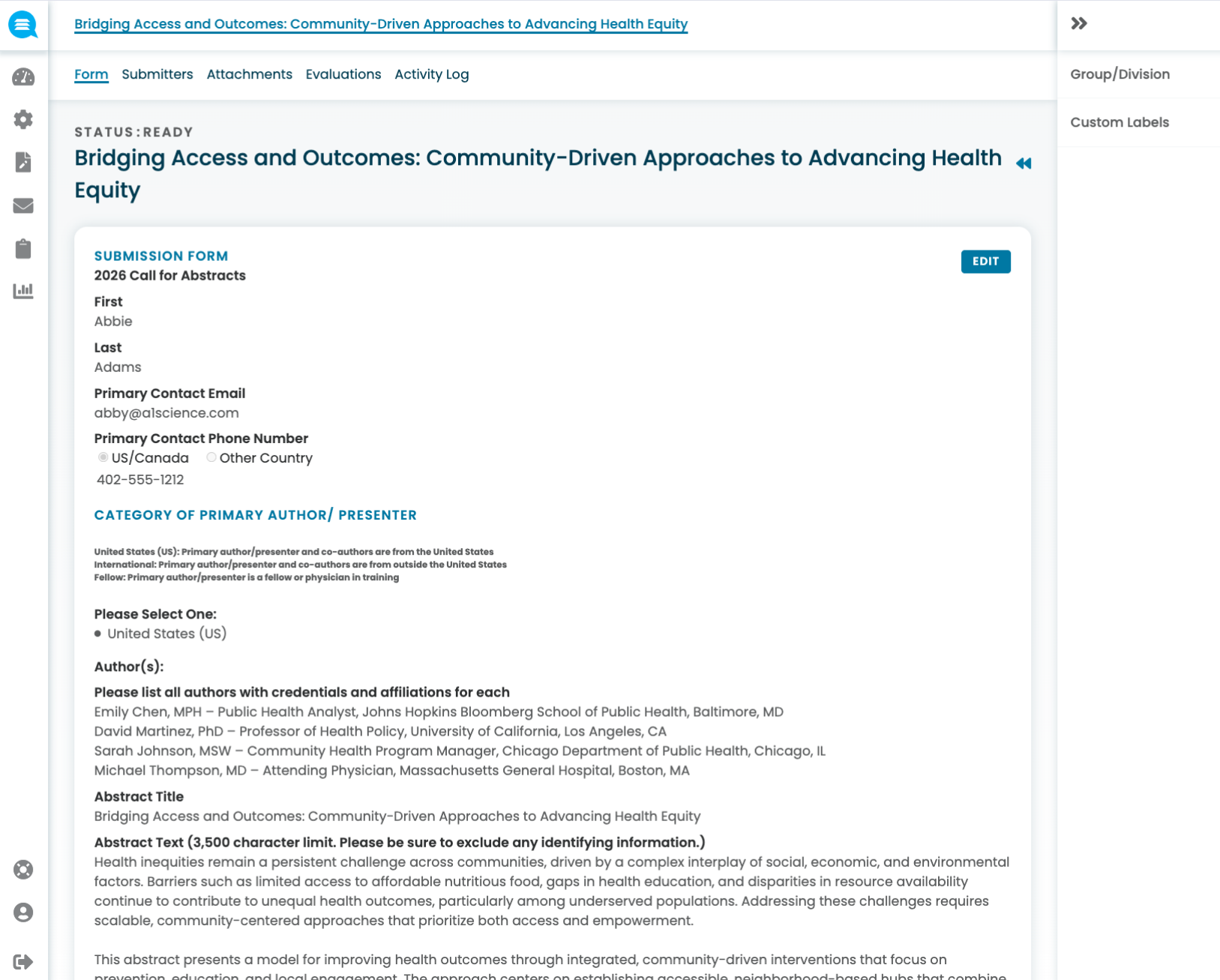

Knowing who submitted and managing potential conflicts of interest is essential — but most open calls collect submitter information in unstructured text fields with no conflict tracking. Reviewr treats submitter data and conflict disclosure as a structured part of the submission process from the start.

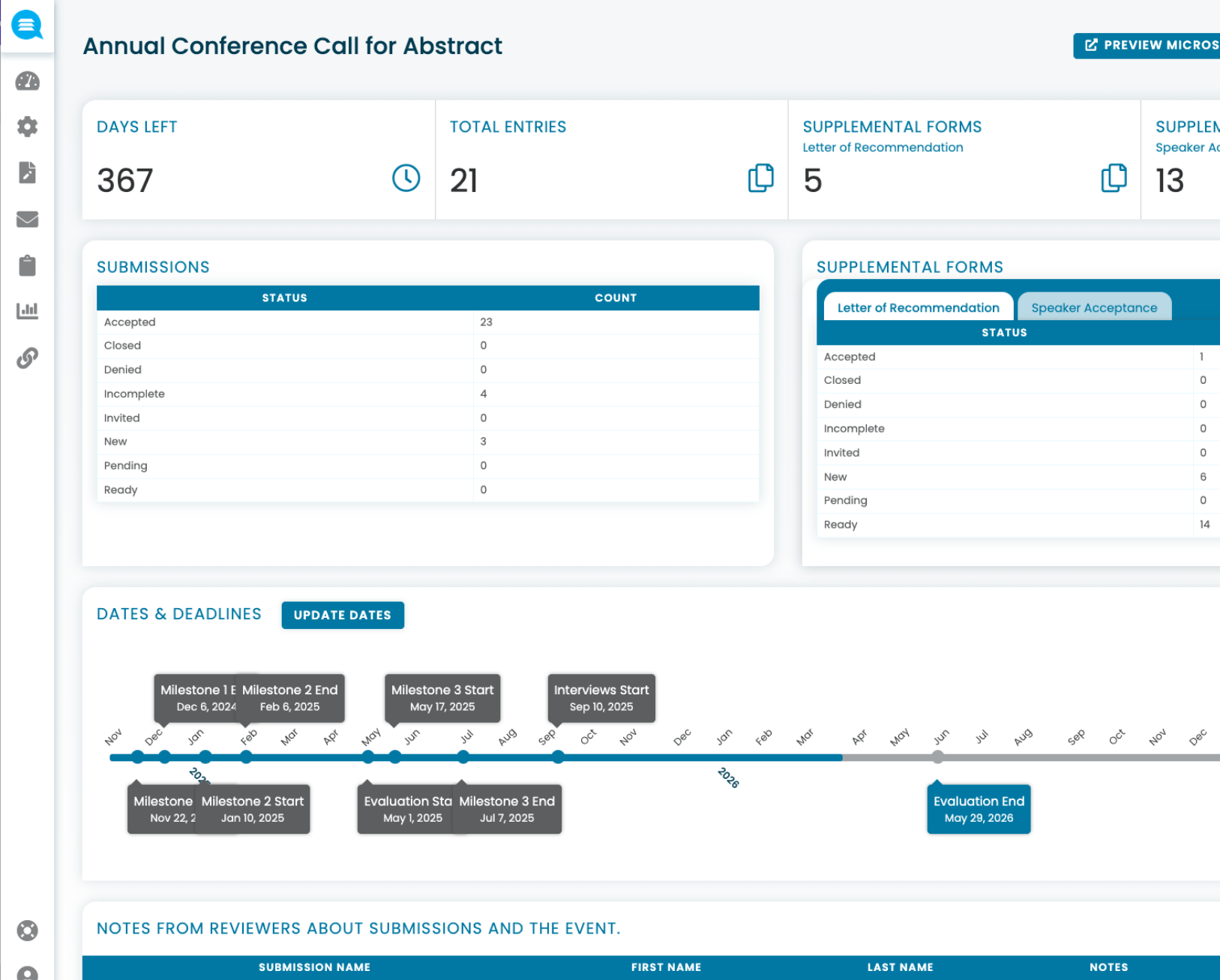

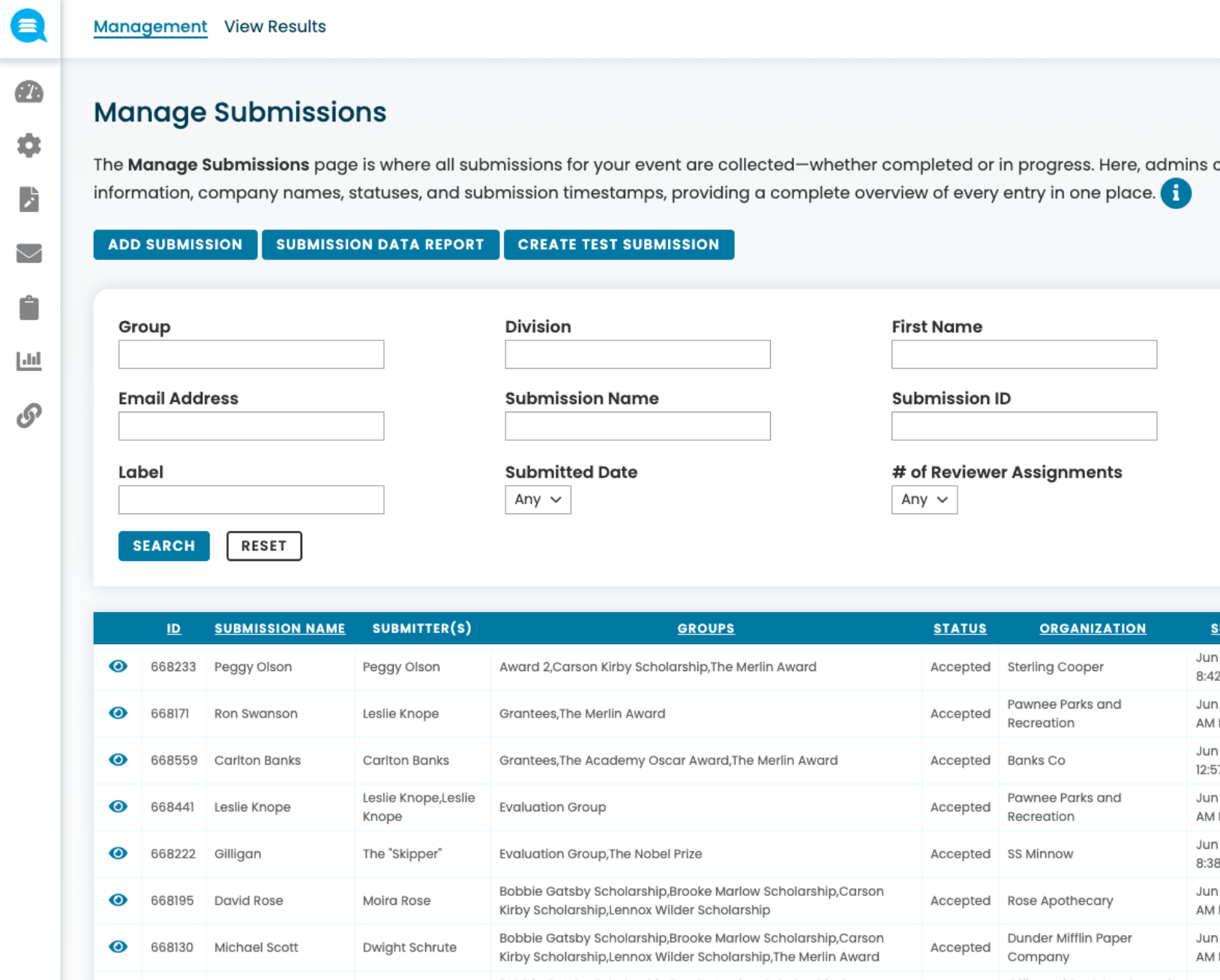

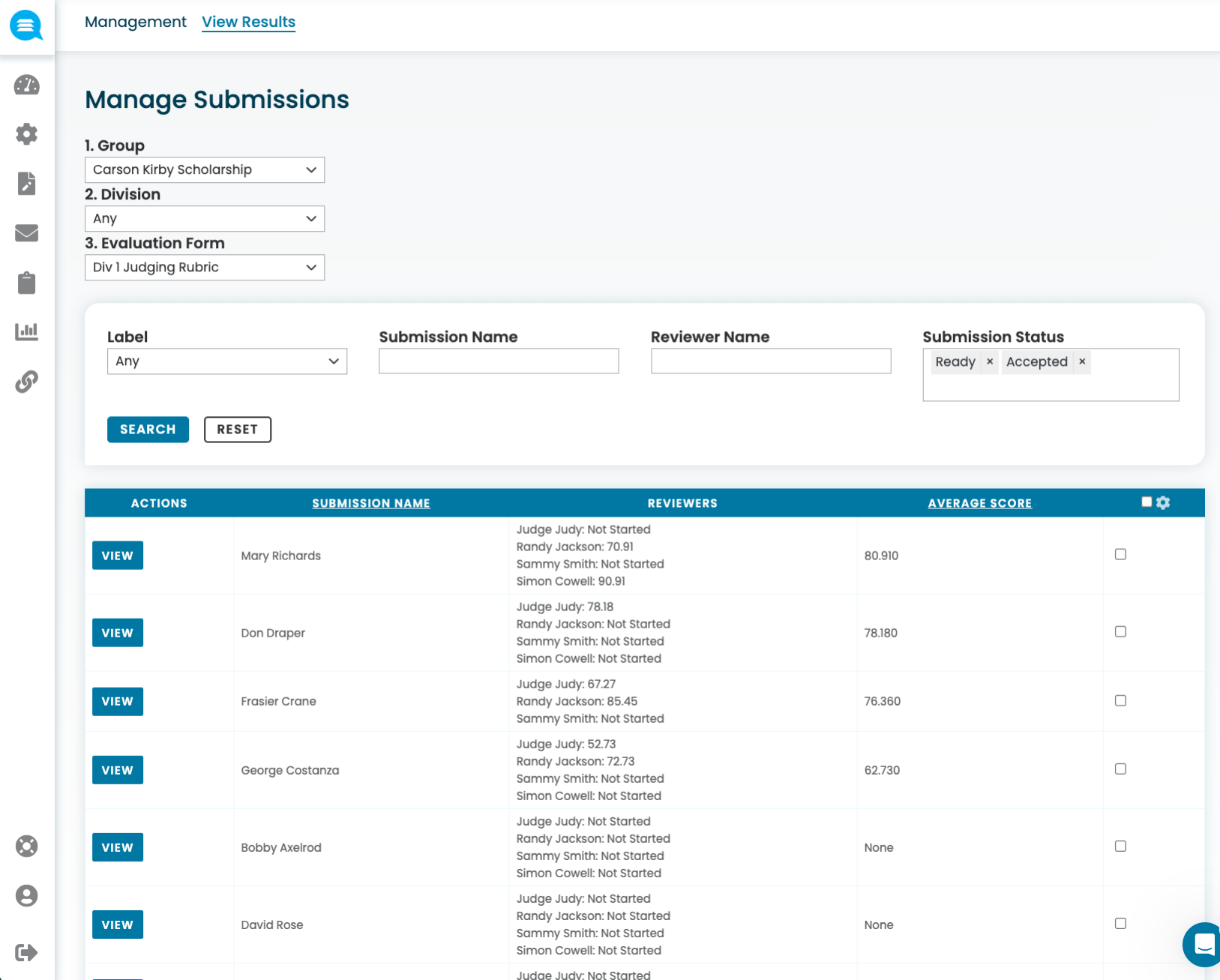

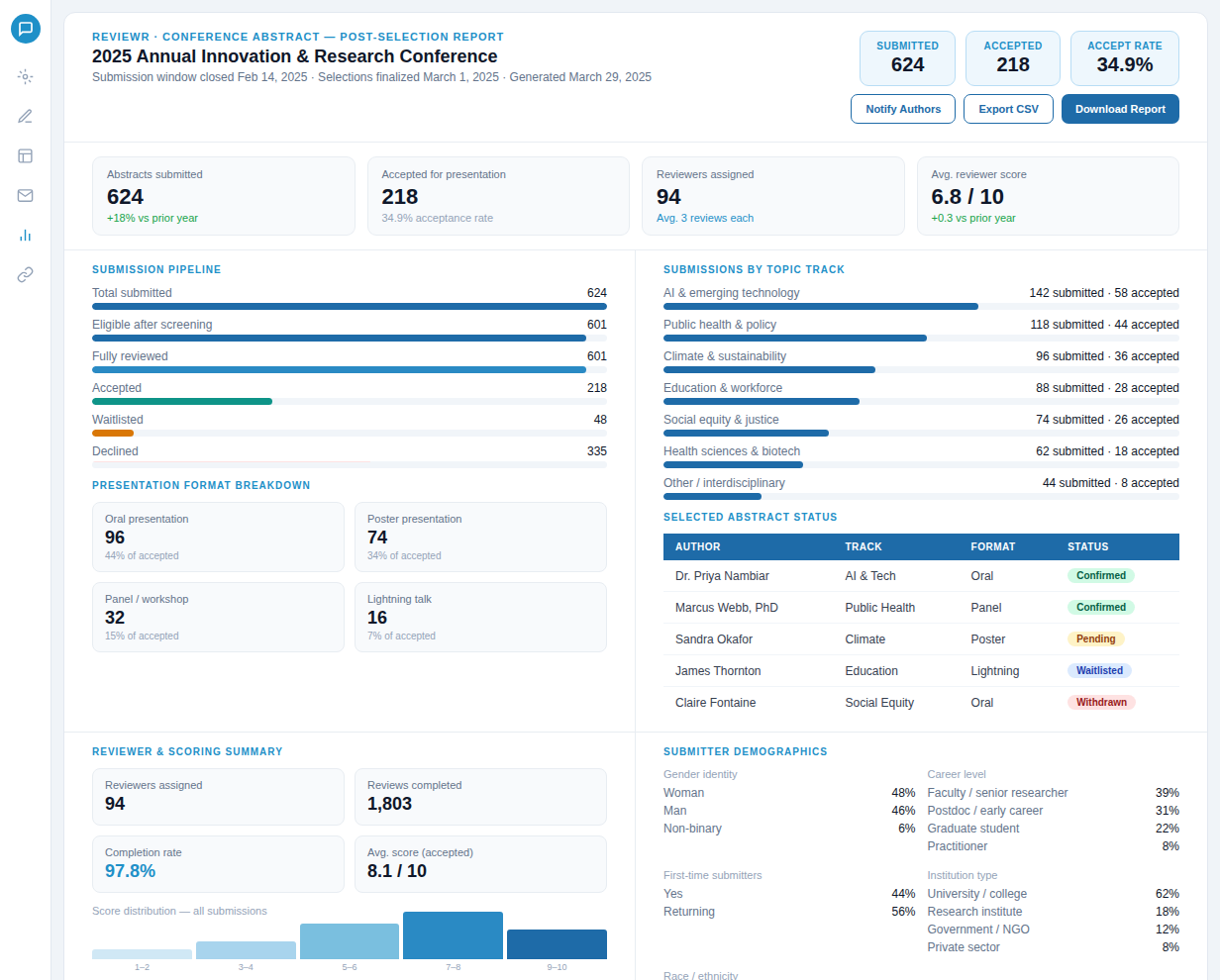

Managing hundreds or thousands of submissions across tracks is a logistics challenge. Most programs resort to spreadsheets, manual tagging, and reactive status tracking. Reviewr organizes submissions automatically as they arrive so you always know exactly where your program stands.

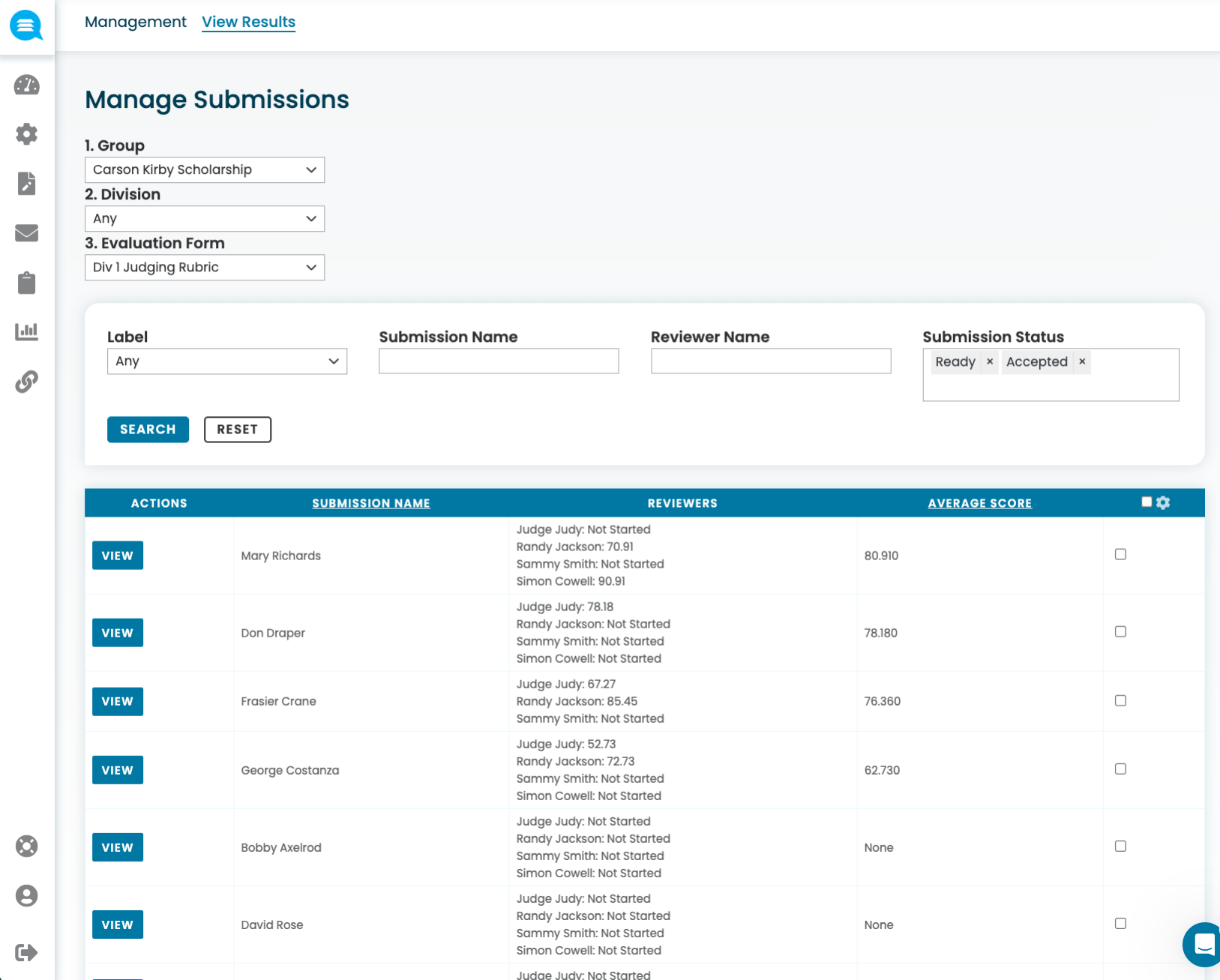

Scores are in. Now comes the work of finalizing selections and notifying submitters. Most teams do this manually: exporting scores, drafting individual emails, and updating spreadsheets. Reviewr automates the entire post-review workflow so nothing falls through the cracks.

Selection isn't the end. Accepted submitters need to confirm participation, submit final deliverables, and meet any outstanding requirements. Most teams manage this over email with no tracking and no automation. Reviewr keeps the structure going after the decision is made.

Transform lengthy abstracts and proposals into concise reviewer briefs — pulling key research contributions, methodology highlights, and speaker credentials into a single overview so reviewers evaluate faster and more consistently.

Automatically detect strict and lenient scoring patterns across your program committee and normalize results — ensuring every proposal is evaluated on a level playing field regardless of which reviewer it was assigned to.

Use AI to match proposals to the right tracks and session formats automatically — reducing misplaced submissions and routing speakers where their content fits best.

Identify AI-generated content and flag plagiarized material across abstracts and proposals — protecting conference integrity and ensuring authentic submissions from every speaker.

Automatically redact speaker names, affiliations, and identifying information from proposals to enable blind review — without manual effort from program chairs.

Extract topics and themes from proposals automatically to identify emerging trends, cluster related sessions, and build coherent conference tracks — helping you organize a more cohesive program.

Yes. Reviewr supports multiple tracks, themes, and session formats in one call. Each track can have unique submission requirements, review criteria, and acceptance rates.


Speakers can add multiple co-authors and co-presenters directly in the submission form. You can designate a primary contact and track affiliations for all contributors.

Reviewr supports blind review, which hides speaker names, affiliations, and identifying information during the review phase. You control when and whether to reveal identities to your program committee.

Yes. After selections are made, Reviewr includes session scheduling tools with room assignments, conflict detection, and automated program book generation.

Yes. Reviewr is SOC 2 Type II certified and uses AES-256 encryption at rest and in transit. Your speaker and proposal data are secure.